Overview

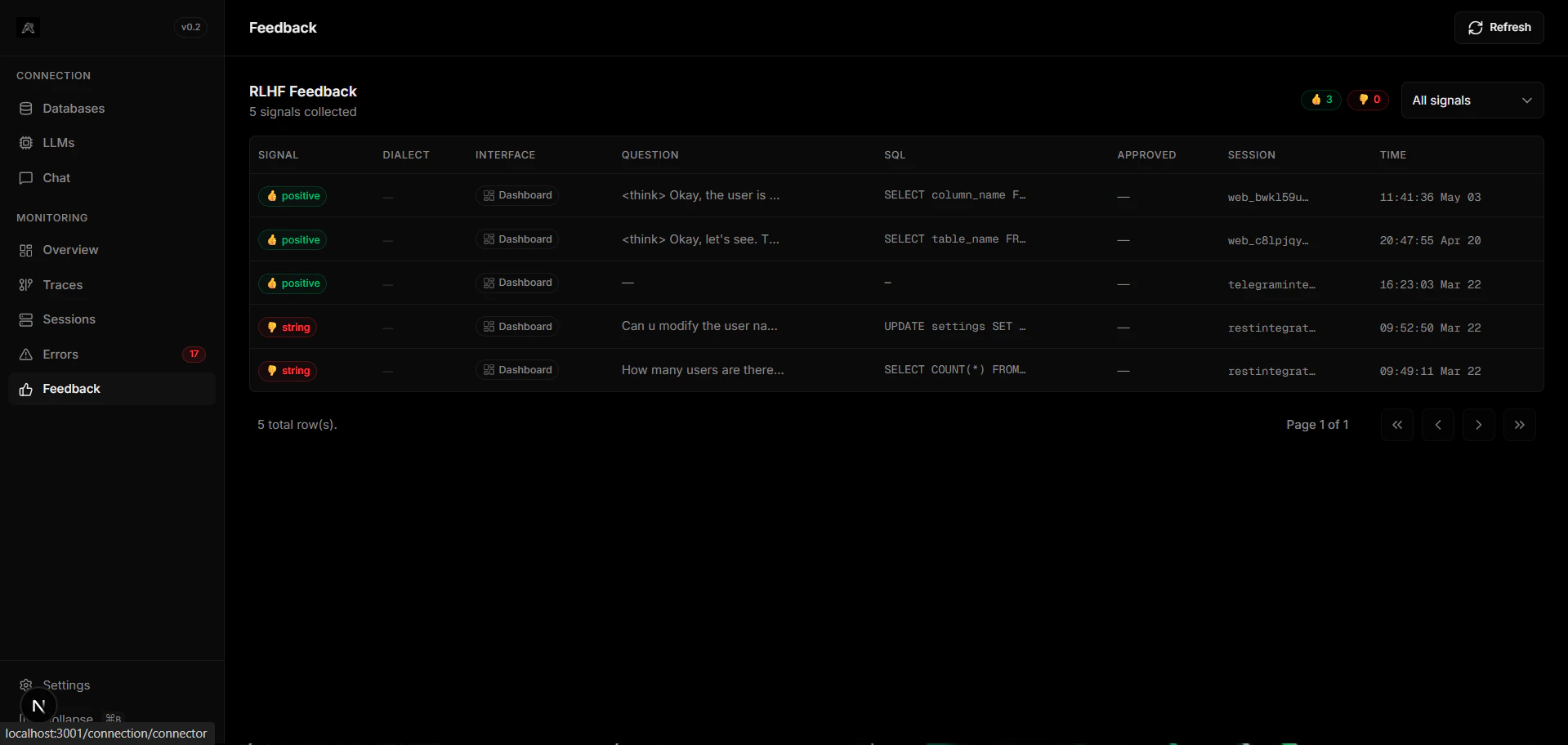

Reinforcement Learning from Human Feedback (RLHF) lets users grade generation quality. Arivu natively captures thumbs-up/thumbs-down ratings and descriptive feedback through chat integrations and uses them to shape prompt optimizations.

Intent Rectification

Locate queries where the LLM misunderstood user nuance and track corrections over time.

Approval Queues

Destructive queries (UPDATE/DELETE) are parked for admin consent before execution.
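
To illustrate the idea of parking destructive statements, here is a minimal sketch. It is not Arivu's implementation: the `ApprovalQueue` class, its method names, and the regex used to detect UPDATE/DELETE are all assumptions made for this example.

```python
import re
from dataclasses import dataclass, field

# Assumption: a statement counts as destructive if it starts with UPDATE or DELETE.
DESTRUCTIVE = re.compile(r"^\s*(UPDATE|DELETE)\b", re.IGNORECASE)


@dataclass
class ApprovalQueue:
    """Hypothetical in-memory queue that holds destructive SQL until an admin decides."""
    pending: dict = field(default_factory=dict)
    _next_id: int = 1

    def submit(self, sql, execute):
        """Run safe statements immediately; park destructive ones and return a ticket."""
        if DESTRUCTIVE.match(sql):
            ticket = self._next_id
            self._next_id += 1
            self.pending[ticket] = sql
            return ("pending", ticket)
        return ("executed", execute(sql))

    def approve(self, ticket, execute):
        """Admin consent: remove the statement from the queue and execute it."""
        sql = self.pending.pop(ticket)
        return execute(sql)

    def reject(self, ticket):
        """Admin refusal: drop the statement without executing it."""
        self.pending.pop(ticket)
```

Here `execute` stands in for whatever callable actually runs SQL against the database. A production detector would likely parse the statement rather than rely on a prefix match.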

How It Works

Signal Capture

Users provide feedback (positive/negative) via the chat UI, Telegram, or any integration adapter. The signal is attached to the query’s trace record.
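
As a rough sketch of what attaching a signal to a trace record could look like, consider the following. The `TraceRecord` and `FeedbackSignal` types, their fields, and `attach_feedback` are hypothetical names chosen for illustration; the actual trace schema is not described here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class FeedbackSignal:
    rating: str                    # "positive" or "negative"
    source: str                    # e.g. "chat_ui" or "telegram" (assumed labels)
    comment: Optional[str] = None  # optional descriptive feedback
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


@dataclass
class TraceRecord:
    trace_id: str
    session_id: str
    query: str
    feedback: list = field(default_factory=list)


def attach_feedback(trace, rating, source, comment=None):
    """Validate the rating and append the signal to the query's trace record."""
    if rating not in ("positive", "negative"):
        raise ValueError(f"unsupported rating: {rating!r}")
    signal = FeedbackSignal(rating=rating, source=source, comment=comment)
    trace.feedback.append(signal)
    return signal
```

Keeping the signal on the trace means a later review can see the original query and the user's reaction side by side.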

Storage

RLHF signals persist in the memory backend (SQLite or Redis), indexed by `session_id` and timestamp.

The `rlhf_feedback` LangGraph node executes entirely downstream of the `response_generator`. Injecting RLHF signals adds zero blocking latency to your conversational UX.
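
The storage step can be sketched with Python's built-in `sqlite3` module. The table name `rlhf_signals`, its columns, and the helper functions below are assumptions for illustration only; they show one way to index signals by `session_id` and timestamp, not the schema Arivu actually uses.

```python
import sqlite3
import time


def open_store(path=":memory:"):
    """Open (or create) a hypothetical feedback store with a composite index."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS rlhf_signals (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               session_id TEXT NOT NULL,
               timestamp REAL NOT NULL,
               rating TEXT NOT NULL,
               comment TEXT
           )"""
    )
    # Index on (session_id, timestamp) so per-session lookups stay ordered and cheap.
    conn.execute(
        "CREATE INDEX IF NOT EXISTS idx_session_ts "
        "ON rlhf_signals (session_id, timestamp)"
    )
    return conn


def save_signal(conn, session_id, rating, comment=None, timestamp=None):
    """Persist one feedback signal; defaults the timestamp to the current time."""
    ts = time.time() if timestamp is None else timestamp
    conn.execute(
        "INSERT INTO rlhf_signals (session_id, timestamp, rating, comment) "
        "VALUES (?, ?, ?, ?)",
        (session_id, ts, rating, comment),
    )
    conn.commit()


def signals_for_session(conn, session_id):
    """Return a session's signals in chronological order."""
    rows = conn.execute(
        "SELECT timestamp, rating, comment FROM rlhf_signals "
        "WHERE session_id = ? ORDER BY timestamp",
        (session_id,),
    )
    return rows.fetchall()
```

Because the write happens after the response has been generated, a call like `save_signal` would sit in the downstream feedback step rather than on the path that returns the answer to the user.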