Overview

Groq runs inference on its LPU™ (Language Processing Unit) architecture. The architecture is purpose-built for real-time AI workloads, with latency well below that of traditional GPU deployments.

Unmatched Speed

Hundreds of tokens per second for popular models like Llama 3.

Open Source Models

First-class support for Llama, Mixtral, and Gemma model variants.

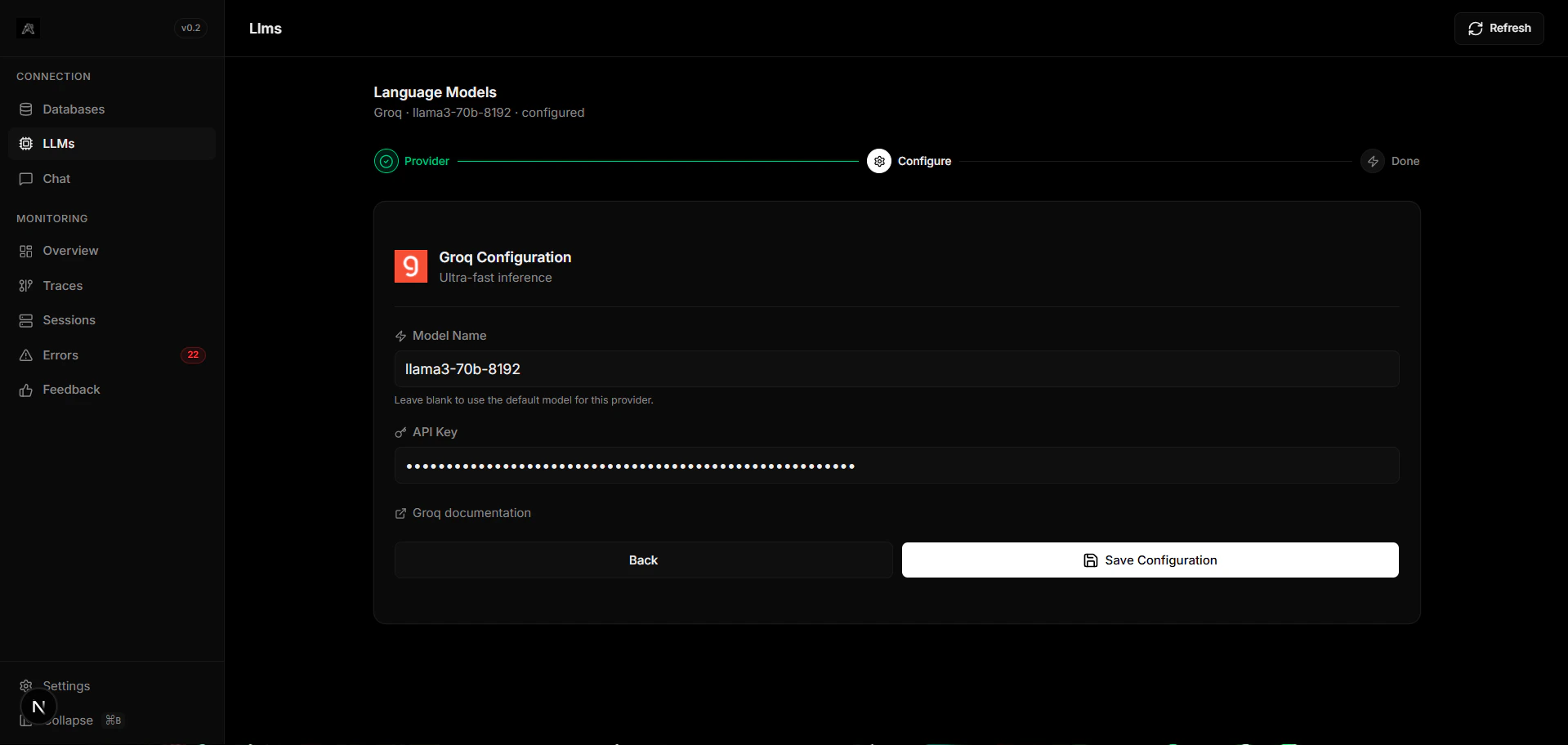

Configuration

Get your API Key

Sign up on the GroqCloud Console, then create an API key.
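Once you have a key, a minimal standard-library sketch of an authenticated request to Groq's OpenAI-compatible chat completions endpoint looks like the following. It assumes the key is stored in a `GROQ_API_KEY` environment variable. It also uses `llama-3.1-8b-instant` as a placeholder model ID, so check the Console for the models currently available. The helper name `build_groq_request` is hypothetical and is not part of any SDK. The function only builds the request and never sends it.

```python
import json
import os
import urllib.request

# Groq's OpenAI-compatible chat completions endpoint.
GROQ_CHAT_URL = "https://api.groq.com/openai/v1/chat/completions"


def build_groq_request(prompt: str, model: str = "llama-3.1-8b-instant",
                       stream: bool = False) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for Groq.

    Hypothetical helper: the key is read from GROQ_API_KEY (an assumed
    variable name) and the default model ID is a placeholder.
    """
    api_key = os.environ.get("GROQ_API_KEY")
    if not api_key:
        raise RuntimeError("Set GROQ_API_KEY to the key from the GroqCloud Console")
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,  # True requests server-sent events instead of one JSON body
    }
    return urllib.request.Request(
        GROQ_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # bearer-token auth with the API key
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send a non-streaming request, pass it to `urllib.request.urlopen` and read `json.load(resp)["choices"][0]["message"]["content"]` from the response.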

Because of Groq’s high generation speed, it is especially well suited to streaming applications such as voice assistants and real-time chatbots.
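Streaming responses from an OpenAI-compatible endpoint arrive as server-sent events. Each event is a `data: {...}` line carrying a `choices[].delta.content` fragment, and the stream ends with `data: [DONE]`. The sketch below is a minimal parser for that format, assuming Groq follows the OpenAI streaming convention. The function name `iter_stream_text` is hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield text fragments from an OpenAI-style server-sent-event stream.

    Hypothetical helper; assumes each event is a single 'data: {...}' line
    and that the stream terminates with 'data: [DONE]'.
    """
    for raw in lines:
        line = raw.decode("utf-8").strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and non-data fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            # The first chunk often carries only the role, with no content.
            delta = choice.get("delta", {}).get("content")
            if delta:
                yield delta
```

With `resp` being an open response to a request sent with `"stream": true`, `for text in iter_stream_text(resp): print(text, end="", flush=True)` prints tokens as they arrive. This works because iterating a `urllib` response yields one line of bytes at a time. Emitting fragments immediately is what lets a voice or chat front end take advantage of low time-to-first-token.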