Overview

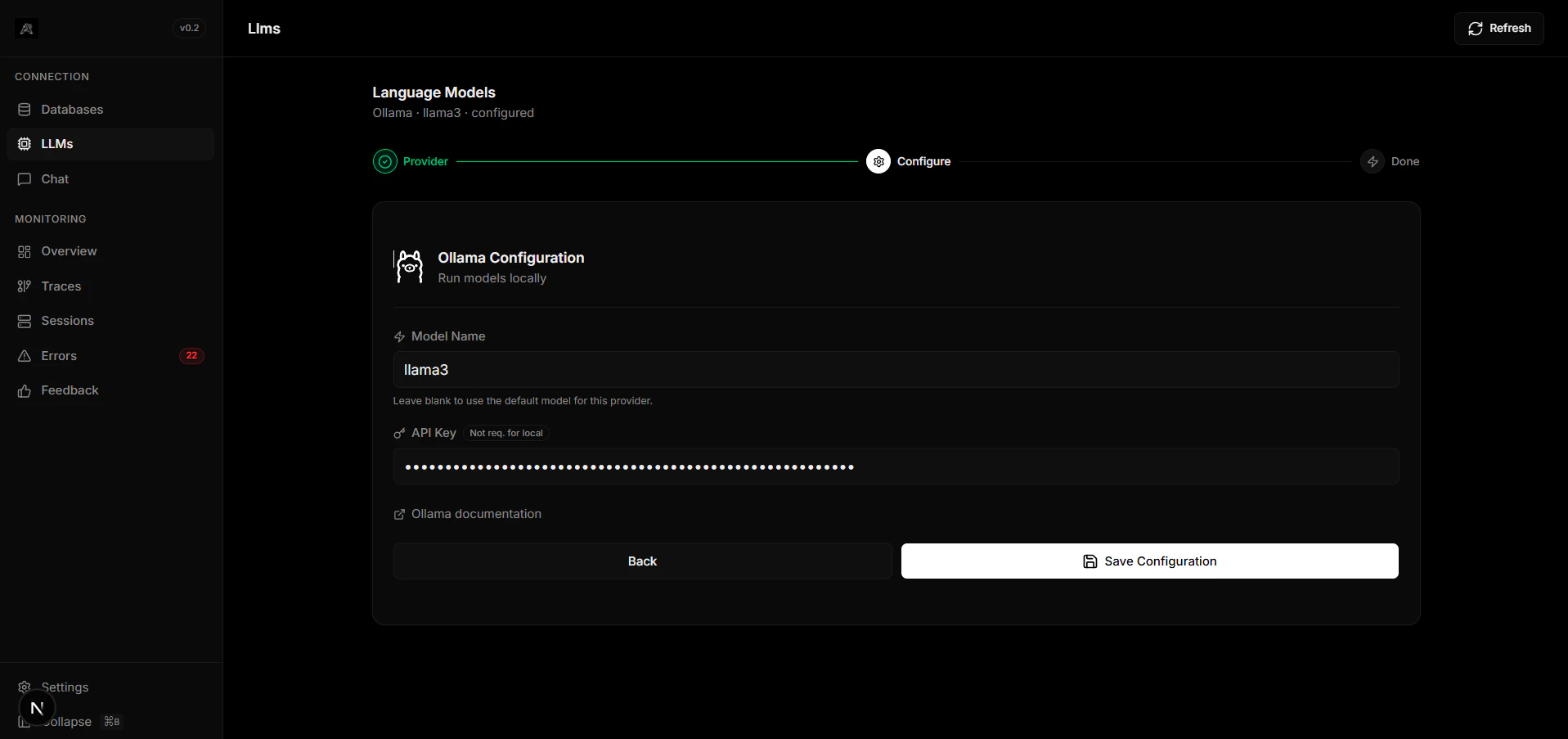

Ollama is an open-source tool for running large language models locally. It is well suited to organizations that need strict data privacy or fully offline AI capabilities.

Privacy First

Your data never leaves your environment.

Ease of Use

Run a model with a single command: ollama run <model>.

Configuration

Install Ollama

Download and install Ollama from Ollama’s website, then pull a model (e.g., ollama pull llama3).
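
As a minimal sketch of the pull and run steps described above, the script below downloads a model and sends it a single prompt. It assumes the ollama CLI is already installed and on your PATH. The MODEL variable, its llama3 default, and the prompt text are illustrative choices, not part of any official setup.

```shell
#!/bin/sh
# Illustrative sketch: MODEL and the prompt below are assumptions for this example.
MODEL="${MODEL:-llama3}"   # falls back to the model named in the example above

if command -v ollama >/dev/null 2>&1; then
    # Download the model weights to the local machine.
    ollama pull "$MODEL"
    # Send one prompt to the model and print its reply.
    ollama run "$MODEL" "Summarize what Ollama does in one sentence."
else
    # The CLI is missing, so report it instead of failing on the commands above.
    echo "ollama not found on PATH; install it first" >&2
fi
```

Running ollama run with only the model name, and no prompt, should instead open an interactive session with that model.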